Hi Team,

I am taking the Fundamentals of DataOps.live training and, as part of it, I set up the runner described in the course.

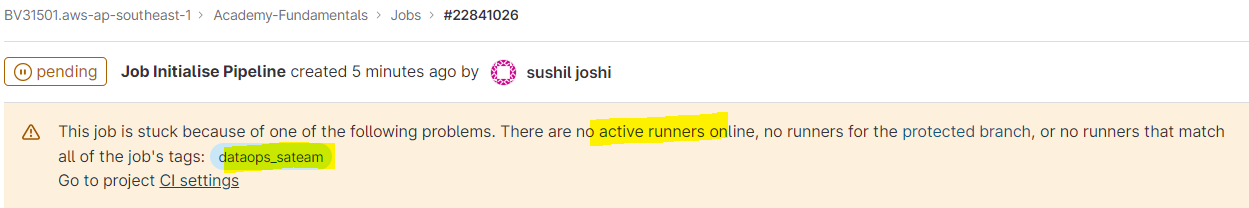

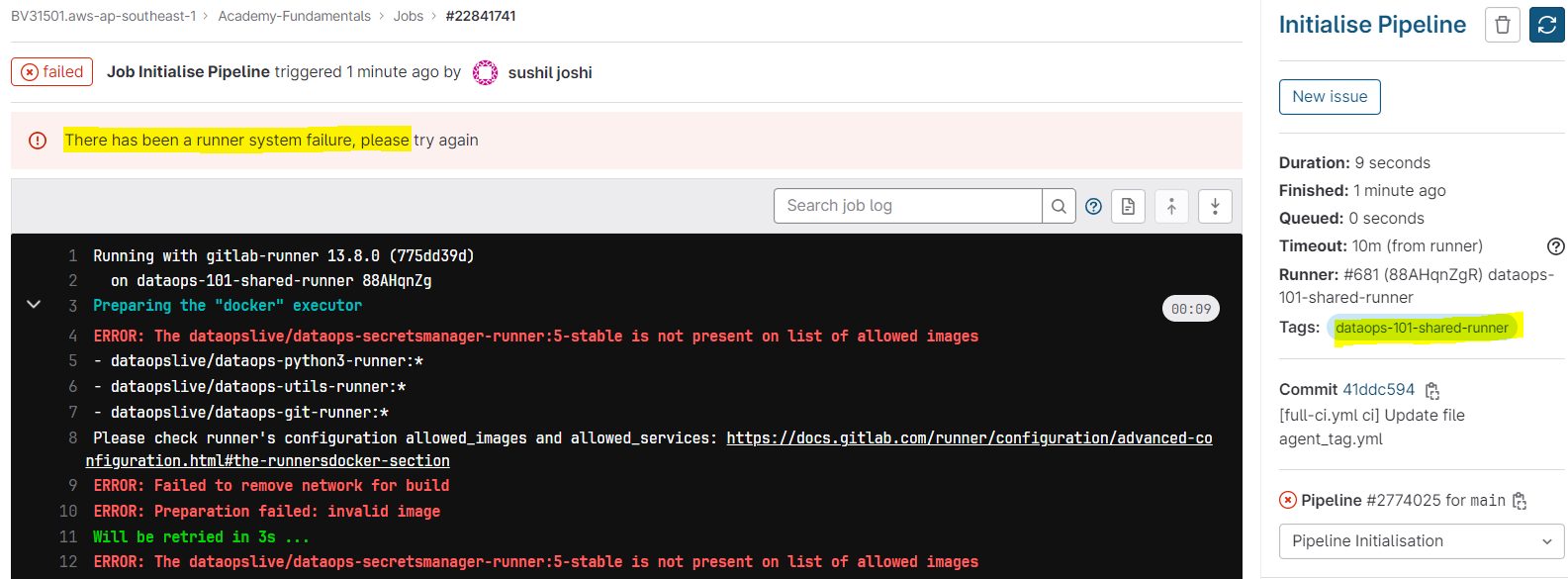

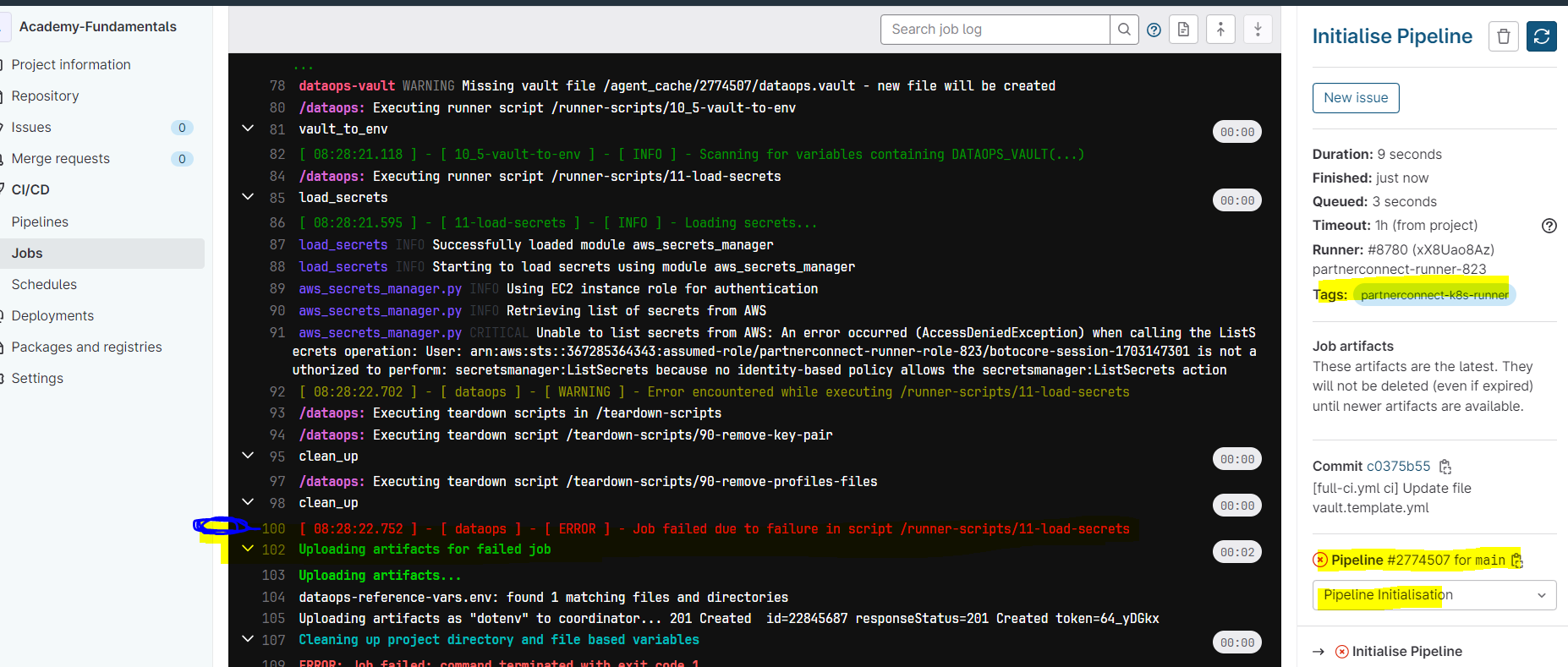

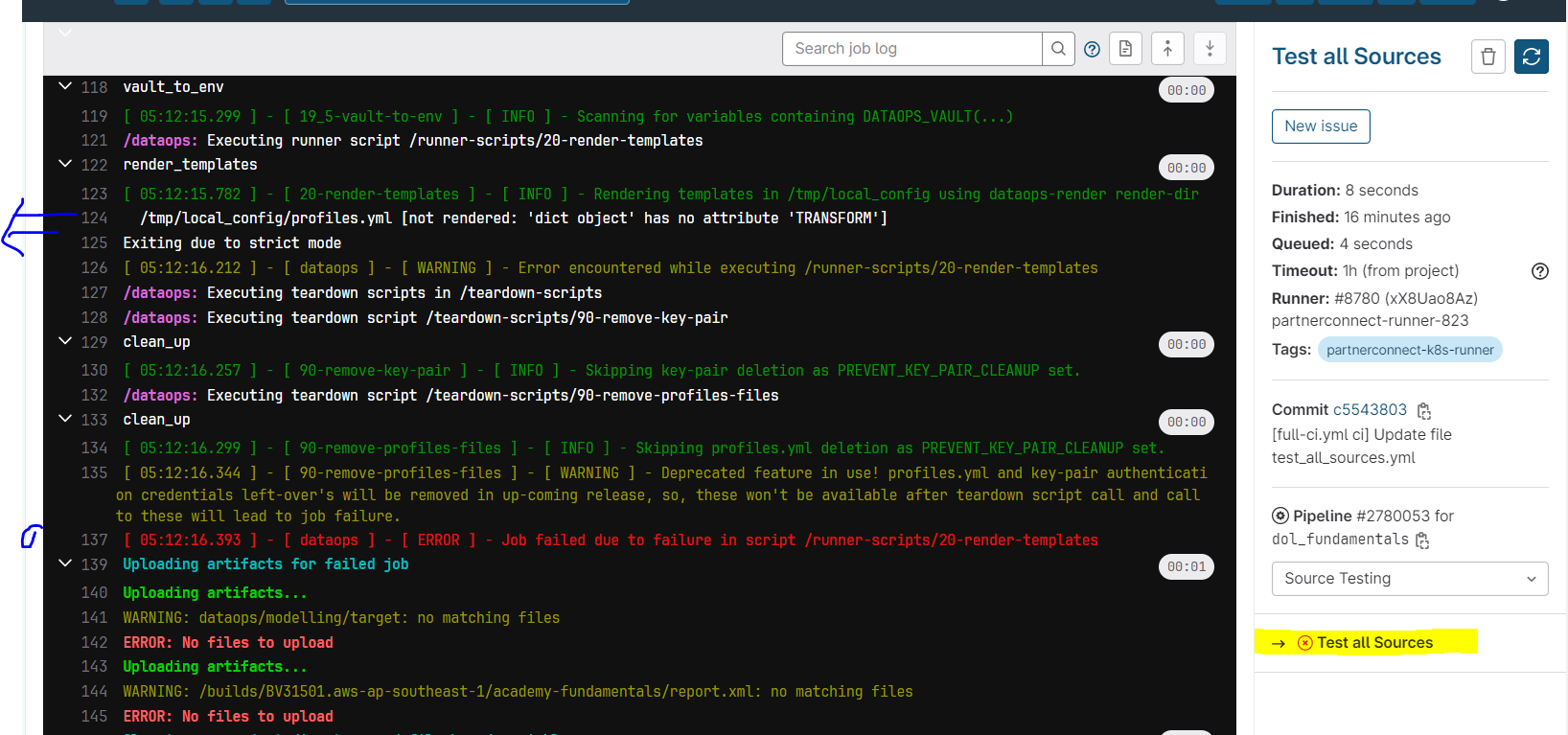

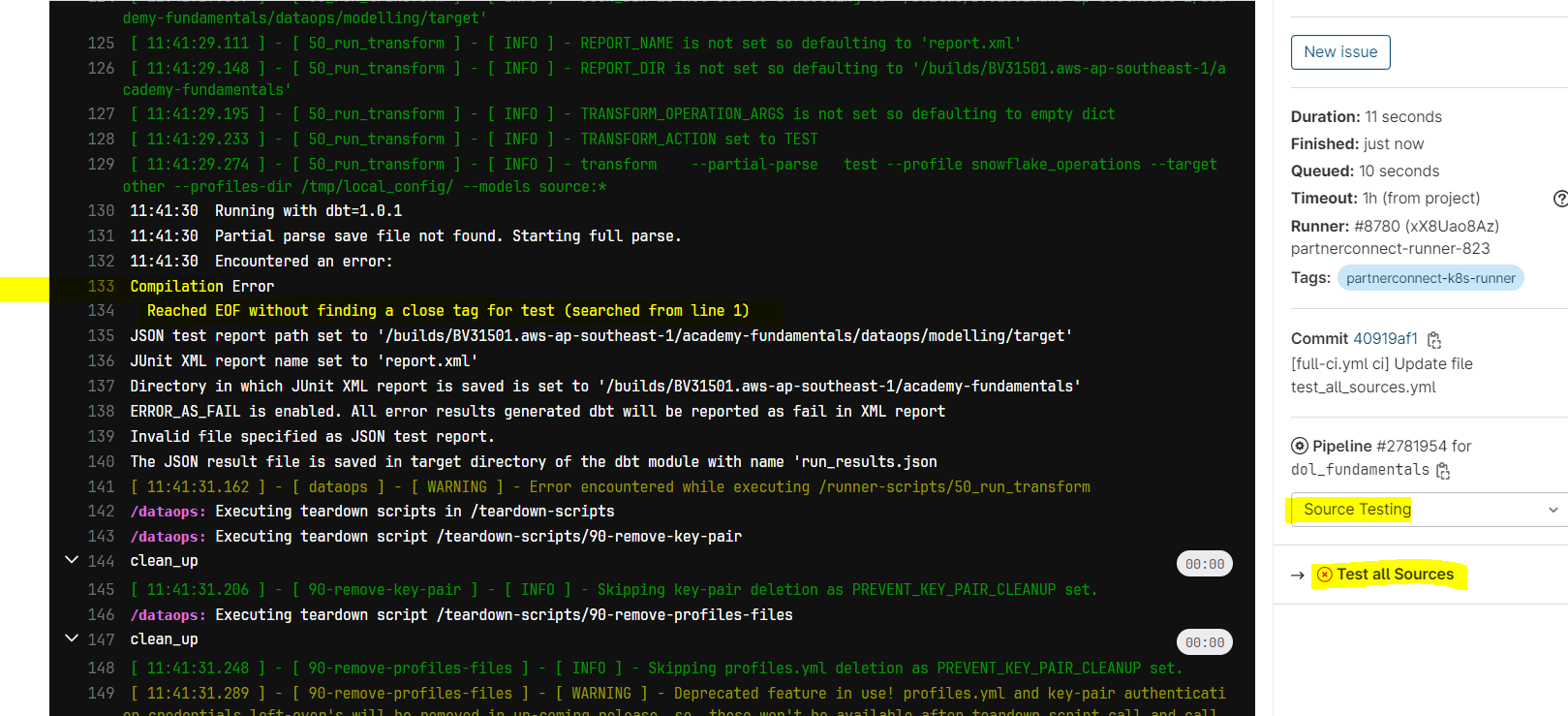

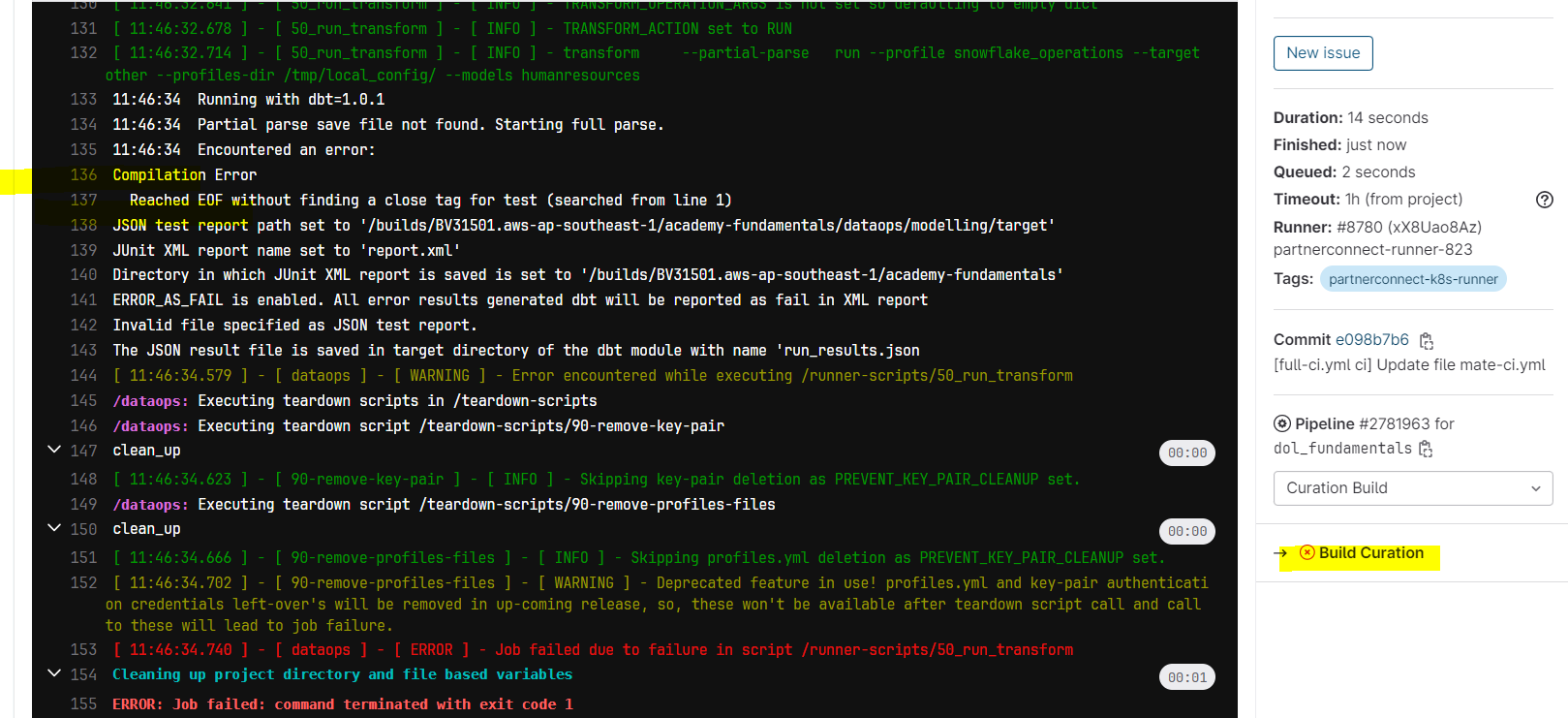

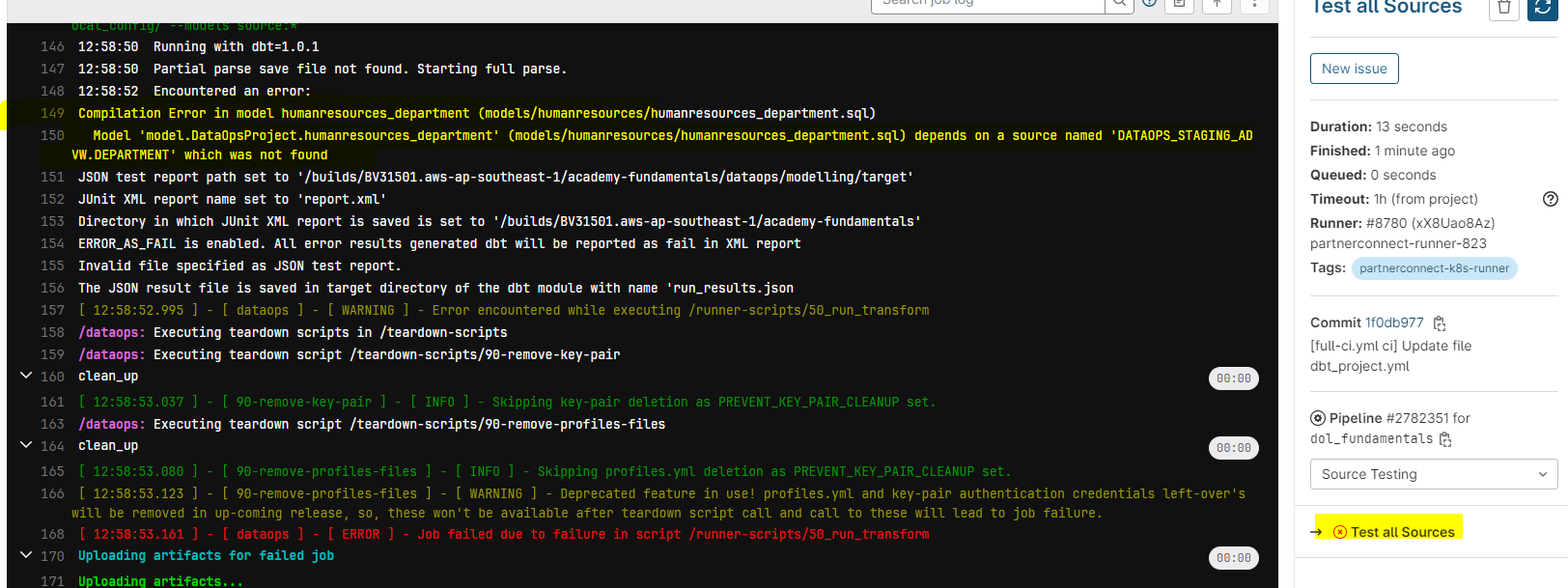

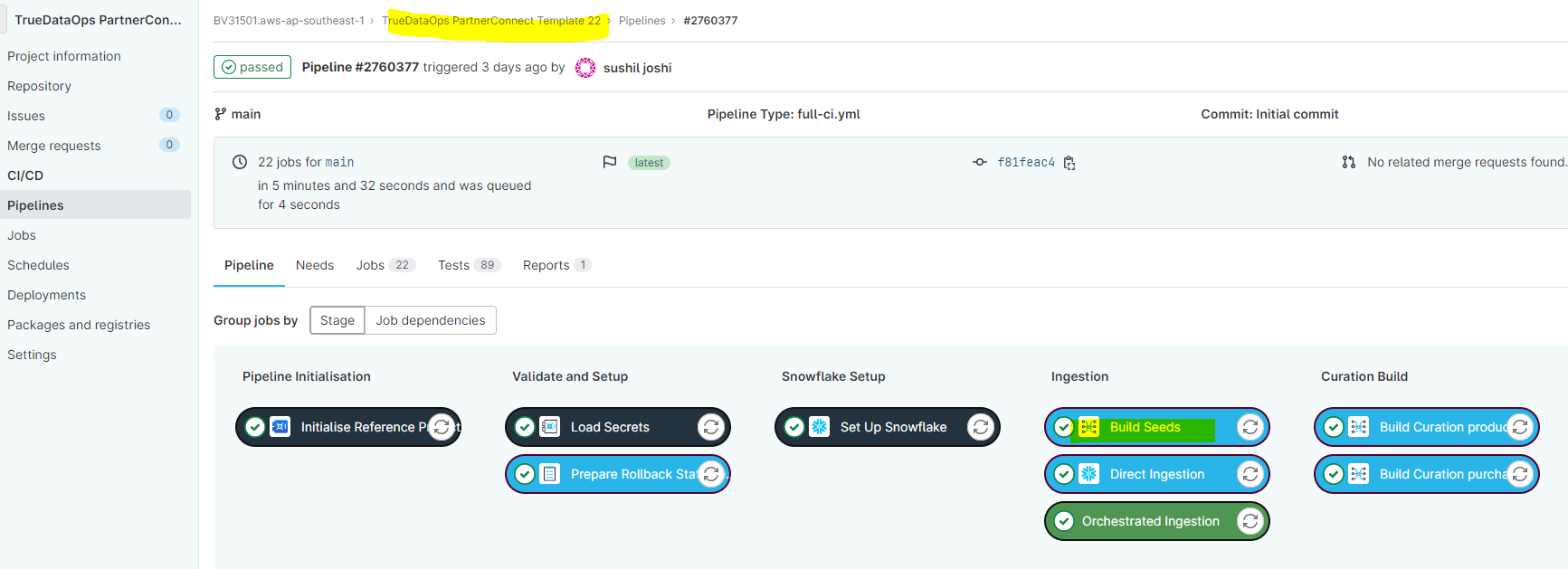

However, when I run the pipeline, it gives the message below:

Please help me resolve this issue so that I can proceed with the course.

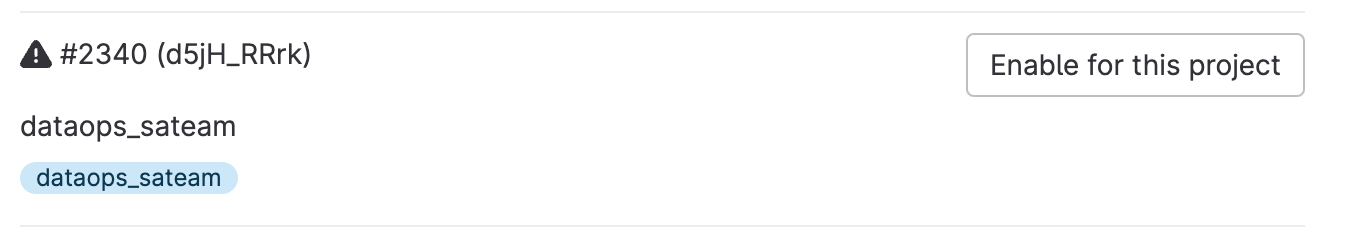

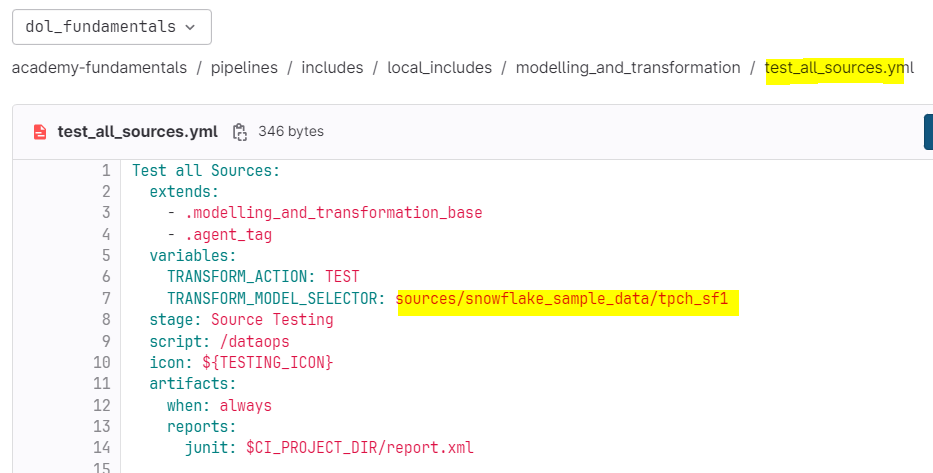

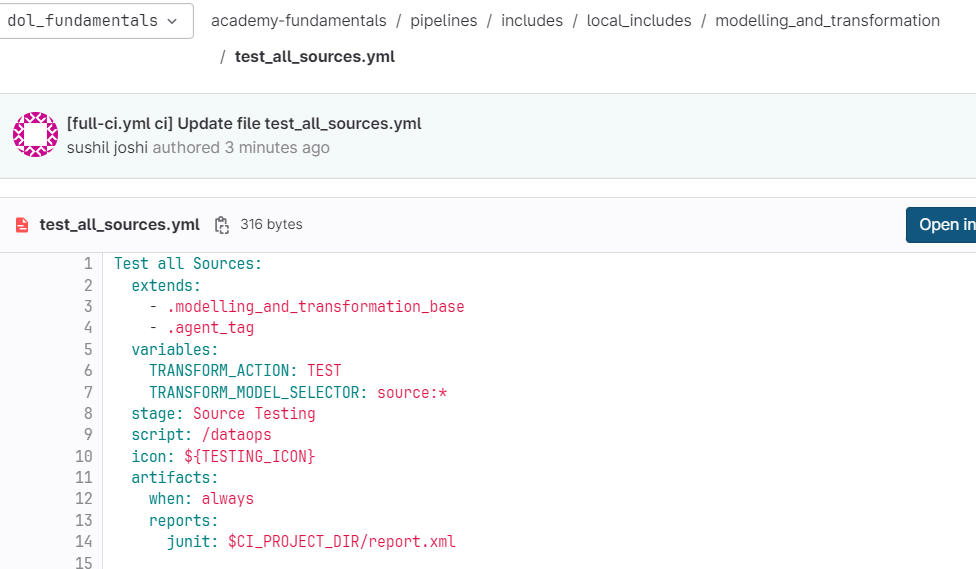

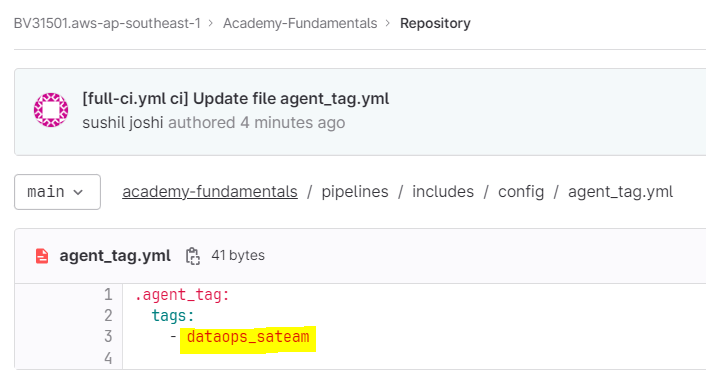

The config was updated as below:

This is the message I get when trying to run the pipeline: